How to Use Your Core Values to Inspire, Retain, and Energize Your Team

For the last few decades, but especially so in recent years, people are seeking out more than just an income from their place of employment. More...

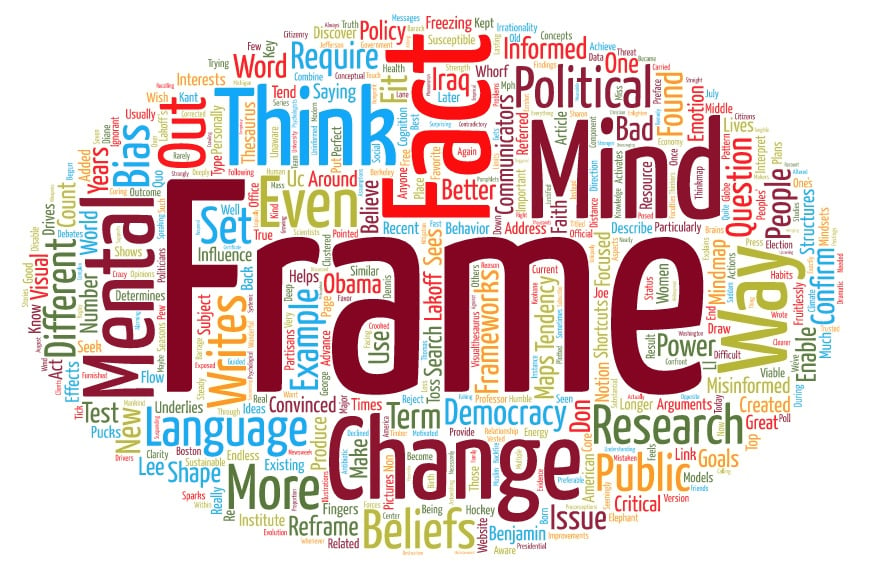

I can no longer count on my fingers the number of times I've searched fruitlessly for the perfect, convincing set of facts to change minds and advance an issue. Really, I should know better. Facts are not what change people's mindsets. Frames are.

Language shapes the way we think, and determines what we can think about.

—Benjamin Lee Whorf

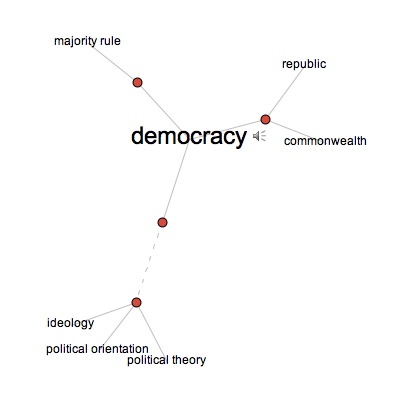

As communicators focused on change, we toss around concepts of message framing and reframing like hockey pucks in this office. Outside the office, I usually describe frames as shortcuts to mental models, with an emotional component added in. Personally, I picture frames as mental maps, similar to the mind maps produced by Thinkmap Visual Thesaurus. On this favorite website of mine, you type in a word or term and it draws a map with that word in the middle of the page and related terms clustered around it; the distance from the key word shows the strength of the relationship.

Visual Thesaurus: Mindmap of Democracy

These conceptual maps draw me back to the writing of UC Berkeley professor George Lakoff.

"Frames are mental structures that shape the way we see the world," Lakoff writes in the preface to Don't Think of an Elephant. "As a result, they shape the goals we seek, the plans we make, the way we act, and what counts as a good or bad outcome of our actions. Reframing is changing the way the public sees the world. Because language activates frames, new language is required for new frames. Thinking differently requires speaking differently."

In a later book, The Political Mind, Lakoff explains how politicians use language to frame their arguments. During the 2010 and 2016 election seasons, a seemingly endless barrage of framing messages kept Lakoff's research and writing top of mind for me.

Frames have different effects on our thinking. Some mental shortcuts help us achieve our goals: enabling frames provide what Dennis Sparks called "direction, clarity, and a steady flow of energy." Other frames disable us by freezing our mental status quo in place. So awareness of the frames that language creates, and of the ideas those frames link to in our minds, is critically important to influencing others on, for example, a viable democracy, public health improvements, a sustainable economy, climate change mitigation, or any other major public issue facing us today.

Trying to change current frames and mental structures is difficult, particularly if we are unaware of their existence and power. Addressing frames, I believe, is at the core of the change communication needed to produce deep understanding of complex subjects and lasting, systemic change in mental and behavioral habits.

A great resource for communicators interested in change is the nonprofit FrameWorks Institute in Washington, D.C., which combines research on framing with research on effective communication to address social problems and policies. FrameWorks has a saying: "If the facts don't fit the frame, it's the facts people reject, not the frame."

This pattern of thinking is sometimes referred to as confirmation bias: the tendency to search for or interpret information in a way that confirms one's preconceptions. A closely related psychological term is "motivated cognition," the tendency to bias our interpretation of facts to fit a version of the world we wish to believe is true.

Political beliefs are especially susceptible to cognitive bias. Research has found that when psychologists confront political partisans with facts that contradict their opinions, the partisans often become even more convinced of their existing beliefs. Diane Benjamin at FrameWorks has pointed to research findings that confirm this once again. The following is from "How facts backfire. Researchers discover a surprising threat to democracy: our brains," by Joe Keohane, The Boston Globe, July 11, 2010:

"It's one of the great assumptions underlying modern democracy that an informed citizenry is preferable to an uninformed one. 'Whenever the people are well-informed, they can be trusted with their own government,' Thomas Jefferson wrote in 1789. This notion, carried down through the years, underlies everything from humble political pamphlets to presidential debates to the very notion of a free press. Mankind may be crooked timber, as Kant put it, uniquely susceptible to ignorance and misinformation, but it's an article of faith that knowledge is the best remedy. If people are furnished with the facts, they will be clearer thinkers and better citizens. If they are ignorant, facts will enlighten them. If they are mistaken, facts will set them straight.

In the end, truth will out. Won't it?

Maybe not. Recently, a few political scientists have begun to discover a human tendency deeply discouraging to anyone with faith in the power of information. It’s this: Facts don’t necessarily have the power to change our minds. In fact, quite the opposite. In a series of studies in 2005 and 2006, researchers at the University of Michigan found that when misinformed people, particularly political partisans, were exposed to corrected facts in news stories, they rarely changed their minds. In fact, they often became even more strongly set in their beliefs. Facts, they found, were not curing misinformation. Like an underpowered antibiotic, facts could actually make misinformation even stronger.”

We've seen dramatic illustrations of this phenomenon in recent years. To me, it was astonishing and alarming that an August 2010 Pew Research Center poll found a "substantial and growing number of Americans say that Barack Obama is a Muslim, while the proportion saying he is a Christian has declined." And Sharon Begley could tick off several more such examples in a Newsweek article titled "The Limits of Reason: Why evolution may favor irrationality."

"Women are bad drivers, Saddam plotted 9/11, Obama was not born in America, and Iraq had weapons of mass destruction: to believe any of these requires suspending some of our critical-thinking faculties and succumbing instead to the kind of irrationality that drives the logically minded crazy. It helps, for instance, to use confirmation bias (seeing and recalling only evidence that supports your beliefs, so you can recount examples of women driving 40 mph in the fast lane). It also helps not to test your beliefs against empirical data (where, exactly, are the WMD, after seven years of U.S. forces crawling all over Iraq?); not to subject beliefs to the plausibility test (faking Obama’s birth certificate would require how widespread a conspiracy?); and to be guided by emotion (the loss of thousands of American lives in Iraq feels more justified if we are avenging 9/11)."

Personally, I've renewed my focus on pointing out, to our team, clients, friends, family, and anyone else within earshot, real and tangible examples of how language and mental maps influence the public policies, policymakers, and questions that touch our lives.

The tendency is to respond to an issue or question as it was posed. But if we want to change minds, it's often important to reframe the question. Old arguments, philosophical differences, and vested interests nearly always shape the way a question gets discussed. So when an issue is important, ask yourself: Are we even asking the right question?

How facts backfire, The Boston Globe

Poll on Religion, Politics, and the President, Pew Research Center

Leading and Learning in K-12 Schools and Systems, Dennis Sparks

Editor's note: Originally published August 26, 2010; updated November 2, 2017.

The Change Conversations blog is where changemakers find inspiration and insights on the power of mission-driven communication to create the change you want to see.

© 2009 to present, Marketing Partners, Inc. Content on the Change Conversations blog is licensed under a Creative Commons Attribution-Noncommercial-NoDerivs 3.0 United States License to share as much as you like. Please attribute to Change Conversations and link to ChangeConversations.

The Creative Commons license may not apply to images used within posts and pages on this website. See the hover-over text or links for the attribution and licensing information associated with each image.

For the last few decades, but especially so in recent years, people are seeking out more than just an income from their place of employment. More...

You know nonprofit organizations need websites just as small businesses do, but you may be surprised to learn nonprofit sites can be more complex and...

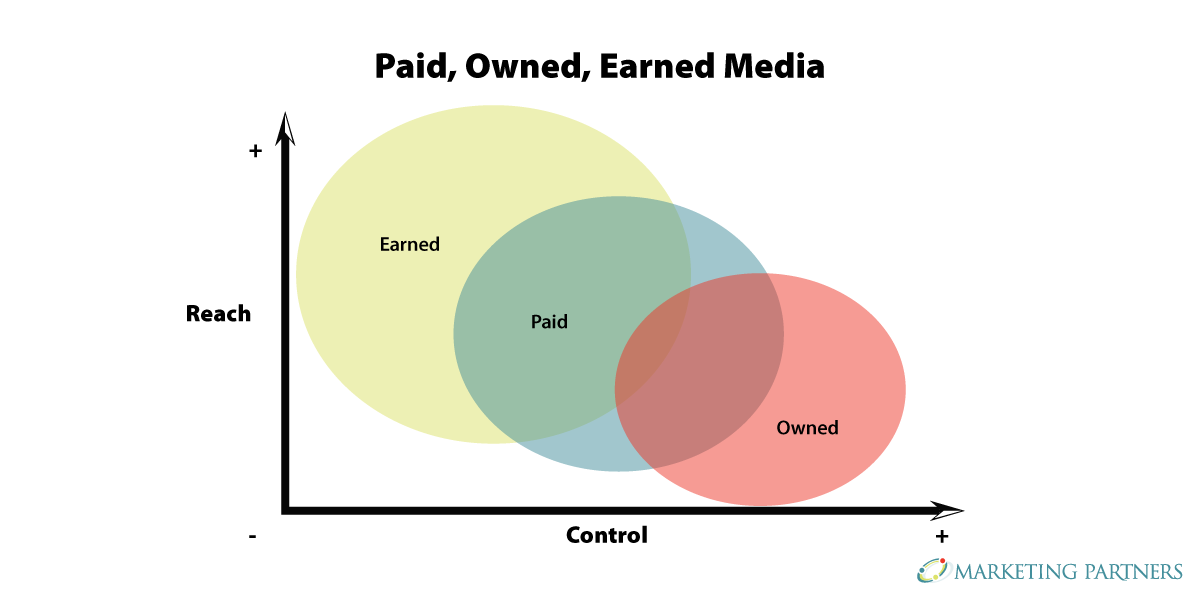

In today’s rapidly evolving media landscape, understanding where and how your story is told isn’t just strategic—it’s essential. How you communicate...